Designing tests

How do your students demonstrate that they have achieved the learning outcomes? How do you test in a fair (objective, valid) and reliable way? And how do you ensure that students do not just learn to pass a test, but also to further their development? This requires good test design.

Why?

In the first place, testing (formative and summative) gives students and lecturers insight into the learning outcomes (feed up), feedback on the learning process (feedback) and a view of any possible adjustments to the learning pathway (feedforward).

Source: http://oabdekkers.nl/2017/08/15/bouwsteen-4-feedback/

Testing is therefore much more than just the conclusion of a module; it is an integral part of the learning process.

What?

Testing involves multiple moments at which students gain insight into their progress towards the learning outcomes or learning objectives. Testing is at the service of learning. Designing tests involves four steps:

1. Selecting test formats based on the learning outcomes

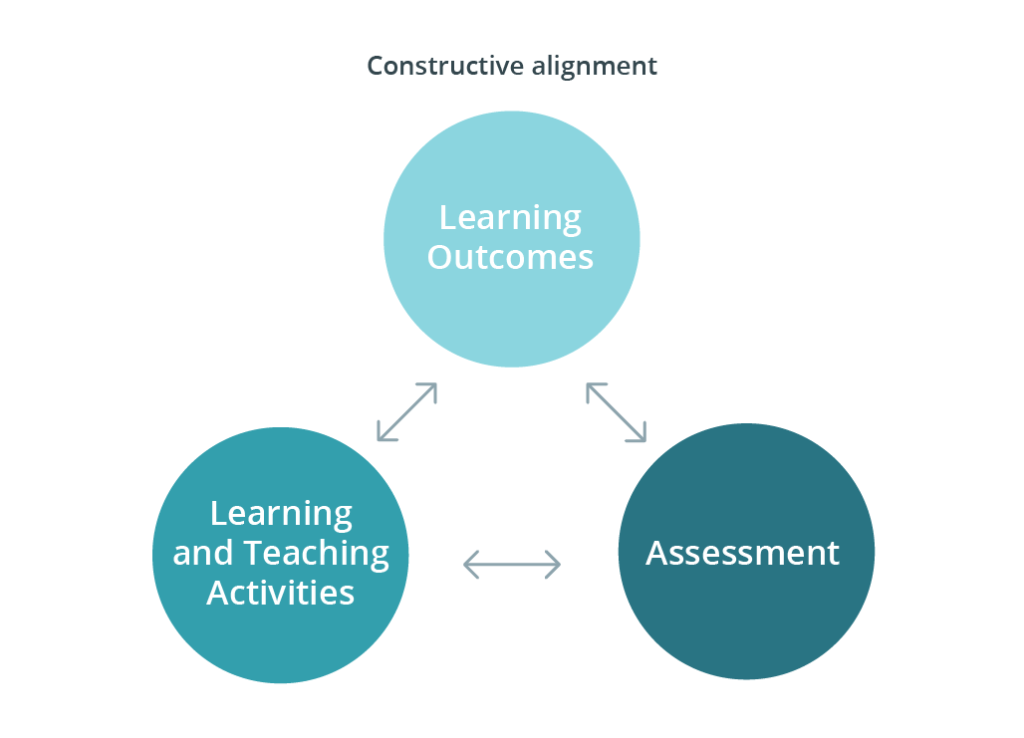

The learning outcomes guide the way education is organised, which includes test design and development. We call this constructive alignment.

This means testing is never separate from the intended learning outcomes, from feedback on development or from possible learning activities with which students can develop towards learning outcomes.

Students must have the opportunity to consider for themselves how they will demonstrate that they have achieved the learning outcomes. They must also be able to influence this. Depending on the target group, the academic year or certain practical considerations, their influence can be increased or decreased.

When you designed the learning outcomes you also decided on a cut-off score. What do students need to demonstrate in order to determine objectively that they have achieved the learning outcome? Complex learning outcomes probably include various components that can be tested: knowledge of certain theories and models, practical skills and cognitive or regulative skills such as self-reflection. You will use various formats to test these components. This will give students insight into their progress on these components before the overall learning outcome is tested, e.g. by making a professional product. All of these test formats can be applied formatively or summatively. Preferably, the overall learning outcome should be tested summatively, with a professional product and a behavioural assessment.

2. Setting criteria for testing

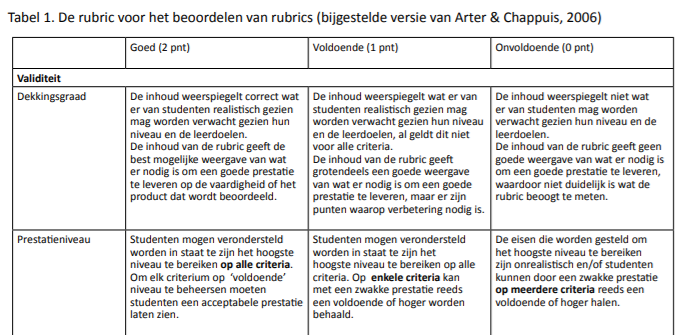

Start out by considering the conclusion: this also applies when designing education. Start with the assessment criteria for the learning outcome, or the summative testing of the final assessment(s). It may help to use rubrics (NL) for this.

Rubric voor rubrics (Van Strien & Joosten-ten Brinke, 2016) (NL)

Once it is clear where students are supposed to be at the end of the module, you can divide the learning outcomes into components that students will work on during the module. You can formulate learning objectives (NL) for these components and translate the objectives into assessment criteria for the various test formats. Working with assessment criteria (NL) supports the learning process of students in the following ways:

1) students develop more subject-specific knowledge and greater confidence in their knowledge;

2) students develop greater confidence in and knowledge of the assessment of themselves and of others;

3) students practise their metacognitive skills;

4) students gain more autonomy, which strengthens their task maturity and their maturity in general.

Consider how you want to test: using a standard norm, in a development-oriented way or by comparing students to one another. None of these methods is necessarily better; each has its pros and cons. You can read more about this topic at score.hva.nl (NL).

In the case of assignments and knowledge tests with open questions, assessment forms can help ensure the assessment is reliable. With multiple-choice questions, it is useful to calculate marks using the cut-off score (NL); there are various ways of doing this.
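As a rough illustration of one such way, the sketch below corrects the cut-off score for guessing and then converts raw scores to marks along two straight lines. It assumes one point per question, a knowledge requirement of 55% of the points above the guess score, and a mark scale from 1 to 10 with 5.5 as the pass mark. These values and all function names are assumptions for the example, not rules from this page; the assessment policy of your degree programme determines the actual values.

```python
def guess_score(option_counts):
    """Expected raw score from blind guessing: 1 / number of options, per question."""
    return sum(1 / n for n in option_counts)


def cut_off_score(option_counts, knowledge_fraction=0.55):
    """Guess score plus a required share of the remaining points (0.55 is assumed)."""
    if not 0 < knowledge_fraction < 1:
        raise ValueError("knowledge_fraction must lie strictly between 0 and 1")
    max_score = len(option_counts)  # one point per question (assumption)
    guess = guess_score(option_counts)
    return guess + knowledge_fraction * (max_score - guess)


def mark(raw_score, option_counts, knowledge_fraction=0.55,
         min_mark=1.0, pass_mark=5.5, max_mark=10.0):
    """Map a raw score to a mark with two straight lines that meet at the cut-off."""
    max_score = len(option_counts)
    cut = cut_off_score(option_counts, knowledge_fraction)
    if raw_score >= cut:
        value = pass_mark + (max_mark - pass_mark) * (raw_score - cut) / (max_score - cut)
    else:
        value = min_mark + (pass_mark - min_mark) * raw_score / cut
    return round(value, 1)


# Hypothetical test: 40 questions with four answer options each.
options = [4] * 40
print(cut_off_score(options))
print(mark(30, options))
```

A student who scores exactly the cut-off receives the pass mark; a full score receives the maximum mark and a score of zero the minimum mark.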

3. Constructing tests

Once the test formats, learning objectives and assessment criteria are clear, you can construct the tests. Do this together with your team and a colleague who has the Senior Examiner Qualification (SEQ). When developing the tests, you can ask for advice from the Assessment Committee and the Examination Board. They also keep an overview of, and safeguard the balance between, the assessment formats used within the degree programme. In this way, they can monitor the programme’s feasibility for students.

A complex learning outcome consists of various components. Students benefit if all of these components have been evaluated formatively before being tested summatively. To this end, it can be helpful to draw up a test blueprint, which allows you to see whether tests cover all aspects or learning objectives and whether the tests are fairly distributed across components or learning objectives. Verify whether your degree programme uses test blueprints.
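A test blueprint can be as simple as a table of tests against learning objectives. The sketch below checks, for each objective, whether it is evaluated formatively as well as summatively and how the points are distributed. The test names, objectives, point values and the `PlannedTest` and `check_blueprint` names are all hypothetical; a real blueprint follows the format your degree programme uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlannedTest:
    name: str
    kind: str      # "formative" or "summative"
    points: dict   # learning objective -> points this test awards for it


def check_blueprint(objectives, tests):
    """Per objective: total formative and summative points, and whether both occur."""
    report = {}
    for objective in objectives:
        totals = {"formative": 0, "summative": 0}
        for test in tests:
            totals[test.kind] += test.points.get(objective, 0)
        report[objective] = {
            **totals,
            "covered": totals["formative"] > 0 and totals["summative"] > 0,
        }
    return report


# Hypothetical module with three components of one learning outcome.
objectives = ["theory", "practical skills", "self-reflection"]
tests = [
    PlannedTest("practice quiz", "formative", {"theory": 10}),
    PlannedTest("peer feedback round", "formative", {"practical skills": 10}),
    PlannedTest("professional product", "summative",
                {"theory": 20, "practical skills": 30, "self-reflection": 10}),
]

for objective, row in check_blueprint(objectives, tests).items():
    print(objective, row)
```

In this example, self-reflection is only tested summatively, so the check flags it as not yet covered by a formative test.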

4. Safeguarding test quality

By following the aforementioned steps, you have developed tests and assessment criteria. The final step is to assess and safeguard the quality of the various tests. Information about the results and evaluation is highly valuable for safeguarding, and where needed improving, the quality of education and of testing. Quality assurance, then, is a process. You assess the quality of tests individually and as a whole before administering them. In this phase, you discuss cases in which there is any doubt and organise calibration sessions to fine-tune the assessment among lecturers. On the basis of the results, you analyse the quality of the test and its components (see Phases 3, 5 and 7 in the Test Cycle illustration).

Source: https://buas.libguides.com/BKE/toelichtingopdetoetscyclus (NL)

Decide with your team how you will carry out this process. Ask colleagues from the Examination Board and from quality assurance to assist or to cast a critical eye.

The standard quality criteria for formative as well as summative tests are:

- learning function and feedback function – the test gives insight into academic progress and motivates students as they continue their learning process;

- validity – the test measures what should be measured;

- reliability – the test provides the same result in the same circumstances;

- transparency – the content and process are clear;

- accessibility – the test is accessible to all students; also, see if you can make the test location-independent.

For each test format, there are different questions you can ask to analyse the test. More information is available at score.hva.nl.
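For a knowledge test with right/wrong items, two figures often used in such an analysis are the proportion of students who answer each item correctly (the p-value) and Cronbach's alpha as an estimate of reliability. The sketch below computes both from a small, made-up score table. The choice of these two measures, the data and the function names are assumptions for illustration; this page does not prescribe them.

```python
from statistics import fmean, pvariance


def p_values(scores):
    """Share of students answering each item correctly (one row of 0/1 per student)."""
    return [fmean(column) for column in zip(*scores)]


def cronbach_alpha(scores):
    """k/(k-1) * (1 - sum of item variances / variance of the total scores)."""
    k = len(scores[0])
    if k < 2:
        raise ValueError("need at least two items")
    item_variances = [pvariance(column) for column in zip(*scores)]
    total_variance = pvariance([sum(row) for row in scores])
    if total_variance == 0:
        raise ValueError("all students have the same total score")
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical results: five students, four items, 1 = correct and 0 = incorrect.
scores = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
print(p_values(scores))
print(cronbach_alpha(scores))
```

Very high or very low p-values point to items that hardly distinguish between students, and a low alpha suggests that the items do not measure one coherent construct. Both figures are a starting point for discussion with colleagues rather than a verdict on the test.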

Formative tests are intended to help students understand where they are in the learning process. Accordingly, the quality requirements for these tests are comparable to those for summative tests. Few things are more frustrating to students than a ‘practice test’ that does not resemble the real test.

How?

At score.hva.nl, you will find many examples and resources for designing tests.

- Brightspace offers a range of ways for students to submit work and for you to check that work, for example for plagiarism.

- In Brightspace, you can construct a digital knowledge test using various question types. You can also cooperate with colleagues to create an item database, enabling students to practise with many questions.

- Students can use FeedbackFruits to give peer feedback.

- Students can submit computer scripts in CodeGrade.

- You can find everything about formative assessment in the Formative Assessment Toolkit (in Dutch).